Multi-Touch Attribution Models: How to Choose the Right One

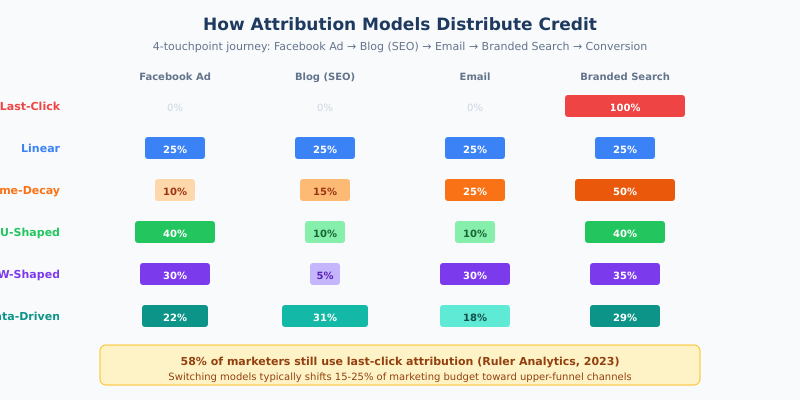

58% of marketers still use last-click attribution. That means more than half the industry gives 100% of conversion credit to the final touchpoint — ignoring every ad impression, blog visit, and email that brought the customer there. It’s like crediting the cashier for the entire sale while ignoring the marketing that got the customer through the door.

Multi-touch attribution distributes credit across all touchpoints in the customer journey. But with four common rules-based models plus data-driven alternatives to choose from, picking the right one isn’t obvious. The wrong model doesn’t just produce bad reports; it actively misallocates your marketing budget. Here’s how each model works, when to use it, and where they all fall short.

Why Does Single-Touch Attribution Fail?

The average B2C purchase involves 4-6 touchpoints. B2B enterprise deals involve 15-30+. Google’s own research found that the typical consumer journey spans at least 2.4 different channel categories, and Forrester reports that B2B buyers engage with an average of 13 content pieces before deciding.

Last-click attribution gives 100% credit to the final interaction. This systematically overvalues bottom-of-funnel channels (branded search, retargeting, direct) and undervalues awareness channels (display, social, content). When budgets follow this model, marketers defund the very channels that feed their pipeline — a death spiral where cutting awareness spend eventually starves the conversions that branded search depends on.

We explored this problem in depth in our analysis of why last-click attribution misleads marketers. The core issue: every single-touch model forces an all-or-nothing split, treating a multi-step journey as a single moment in which one touchpoint gets 100% of the credit and every other touchpoint gets zero.

How Do Multi-Touch Attribution Models Distribute Credit?

Each model answers the same question differently: “Which touchpoints deserve credit for this conversion?” Here’s how they distribute credit across a typical 4-touchpoint journey (Facebook Ad → Blog via SEO → Email → Branded Search → Conversion):

Linear Attribution

Splits credit equally across all touchpoints. In a 4-touch journey, each gets 25%.
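Here is a minimal Python sketch of the linear rule, applied to the four-touch example journey described at the start of this section. The function name and channel labels are illustrative and are not taken from any particular analytics tool.

```python
from typing import Dict, List


def linear_attribution(path: List[str]) -> Dict[str, float]:
    """Split one conversion's credit equally across every touchpoint in the path."""
    if not path:
        return {}
    share = 1.0 / len(path)
    credit: Dict[str, float] = {}
    for channel in path:
        # A channel that appears more than once collects one share per appearance.
        credit[channel] = credit.get(channel, 0.0) + share
    return credit


journey = ["Facebook Ad", "SEO Blog", "Email", "Branded Search"]
print(linear_attribution(journey))
# {'Facebook Ad': 0.25, 'SEO Blog': 0.25, 'Email': 0.25, 'Branded Search': 0.25}
```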

Advantage: Simple, democratic. No touchpoint is invisible. Good as a baseline when you’re first moving beyond last-click.

Disadvantage: Treats a random display impression the same as a high-intent demo request. A quick banner view doesn’t deserve the same credit as a 20-minute product evaluation.

Best for: Teams new to multi-touch attribution who want a simple starting point.

Time-Decay Attribution

Uses a half-life function — touchpoints closer to conversion get exponentially more credit. With a common 7-day half-life, a touchpoint 7 days before conversion gets 50% of the credit of a conversion-day touchpoint, 14 days out gets 25%, and so on.
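The half-life rule can be sketched as follows. Each touchpoint’s raw weight is 0.5 raised to the power (days before conversion divided by the half-life), and the weights are then normalized so one conversion’s credit sums to 1. The day offsets assigned to the example journey are hypothetical.

```python
from typing import Dict, List, Tuple


def time_decay_attribution(
    touches: List[Tuple[str, float]], half_life_days: float = 7.0
) -> Dict[str, float]:
    """touches: (channel, days_before_conversion) pairs. Returns credit summing to 1."""
    # Raw weight halves for every half-life the touch sits before the conversion.
    weights = [(channel, 0.5 ** (days / half_life_days)) for channel, days in touches]
    total = sum(weight for _, weight in weights)
    credit: Dict[str, float] = {}
    for channel, weight in weights:
        credit[channel] = credit.get(channel, 0.0) + weight / total
    return credit


# Hypothetical timing: days before conversion for each touch in the example journey.
journey = [("Facebook Ad", 14), ("SEO Blog", 7), ("Email", 2), ("Branded Search", 0)]
credit = time_decay_attribution(journey)
print(round(credit["SEO Blog"] / credit["Branded Search"], 3))     # 0.5
print(round(credit["Facebook Ad"] / credit["Branded Search"], 3))  # 0.25
```

Normalizing changes the absolute shares but preserves the ratios described in the text: the 7-day-old touch always carries half the weight of the conversion-day touch, and the 14-day-old touch a quarter.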

Advantage: Reflects the recency effect in purchase decisions. The touchpoints that pushed the customer over the line get more credit.

Disadvantage: Systematically undervalues brand-building and awareness campaigns. With a 7-day half-life, a top-of-funnel ad that planted the seed three weeks earlier carries only one-eighth the weight of a conversion-day touchpoint.

Best for: Short sales cycles, e-commerce, promotion-driven businesses where recency genuinely matters.

Position-Based (U-Shaped) Attribution

Gives 40% to the first touch, 40% to the last touch, and splits the remaining 20% among middle interactions.
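A sketch of the 40/20/40 rule on the same example journey. Tools differ on one- and two-touch journeys; giving a lone touch 100% and splitting two touches 50/50 is an assumption made in this sketch, not a documented standard.

```python
from typing import Dict, List


def position_based_attribution(
    path: List[str], first: float = 0.4, last: float = 0.4
) -> Dict[str, float]:
    """U-shaped credit: `first` to the first touch, `last` to the last, rest split across the middle."""
    credit: Dict[str, float] = {}

    def add(channel: str, amount: float) -> None:
        credit[channel] = credit.get(channel, 0.0) + amount

    n = len(path)
    if n == 0:
        return credit
    if n == 1:
        add(path[0], 1.0)  # assumption: a single touch gets everything
        return credit
    if n == 2:
        # assumption: with no middle touches, split the credit evenly
        add(path[0], 0.5)
        add(path[1], 0.5)
        return credit
    add(path[0], first)
    add(path[-1], last)
    middle_share = (1.0 - first - last) / (n - 2)
    for channel in path[1:-1]:
        add(channel, middle_share)
    return credit


journey = ["Facebook Ad", "SEO Blog", "Email", "Branded Search"]
print({ch: round(share, 2) for ch, share in position_based_attribution(journey).items()})
# {'Facebook Ad': 0.4, 'SEO Blog': 0.1, 'Email': 0.1, 'Branded Search': 0.4}
```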

Advantage: Recognizes that both discovery and closing matter most. The channel that introduced the customer and the one that sealed the deal get premium credit.

Disadvantage: The 40/20/40 split is arbitrary. And it doesn’t account for critical middle moments like lead creation or product demos.

Best for: B2C businesses with moderate sales cycles. A solid upgrade from last-click for most teams.

W-Shaped Attribution

Extends U-shaped by adding a third key moment: the lead-creation touchpoint (form fill, signup, trial start). Assigns ~30% each to first touch, lead creation, and close — with ~10% split among remaining touchpoints.
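A sketch of the W-shaped rule. The caller passes the index of the lead-creation touch; for the example journey we assume, hypothetically, that the customer signed up during the SEO blog visit. If a journey has no touches beyond the three key ones, this sketch rescales so credit still sums to 1, which is an assumption rather than a standard.

```python
from typing import Dict, List


def w_shaped_attribution(
    path: List[str], lead_index: int, key_share: float = 0.3
) -> Dict[str, float]:
    """`key_share` each to first, lead-creation, and last touch; remainder split over the rest."""
    n = len(path)
    if n == 0:
        return {}
    if not 0 <= lead_index < n:
        raise ValueError("lead_index must point at a touch in the path")
    key_positions = (0, lead_index, n - 1)
    weights = [0.0] * n
    for i in key_positions:
        # Roles can overlap (e.g. the first touch is also the lead touch); shares then add up.
        weights[i] += key_share
    others = [i for i in range(n) if i not in key_positions]
    remainder = 1.0 - 3 * key_share
    for i in others:
        weights[i] += remainder / len(others)
    # Assumption: rescale so the credits always sum to 1 (matters when `others` is empty).
    total = sum(weights)
    credit: Dict[str, float] = {}
    for channel, weight in zip(path, weights):
        credit[channel] = credit.get(channel, 0.0) + weight / total
    return credit


journey = ["Facebook Ad", "SEO Blog", "Email", "Branded Search"]
print({ch: round(s, 2) for ch, s in w_shaped_attribution(journey, lead_index=1).items()})
# {'Facebook Ad': 0.3, 'SEO Blog': 0.3, 'Email': 0.1, 'Branded Search': 0.3}
```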

Advantage: Better for B2B where lead creation is a distinct, valuable event. Captures the full funnel: awareness → lead → close.

Disadvantage: Requires clearly defining what “lead creation” means. More complex to implement and explain to stakeholders.

Best for: B2B SaaS, businesses with defined lead-creation stages, and longer sales cycles.

Data-Driven Attribution (Algorithmic)

Uses machine learning and cooperative game theory (typically Shapley value calculations) to analyze all converting and non-converting paths, then assigns fractional credit based on each touchpoint’s estimated incremental impact in your specific data.
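To make the Shapley idea concrete, here is a minimal exact computation over three channels. The coalition values are invented (read them as "conversions we would expect if only these channels were present"); estimating such values from real converting and non-converting paths is the hard part, and this sketch is not how GA4 or any specific vendor implements it.

```python
from itertools import combinations
from math import factorial
from typing import Dict, FrozenSet, List


def shapley_values(
    channels: List[str], value: Dict[FrozenSet[str], float]
) -> Dict[str, float]:
    """Exact Shapley value per channel, given a value for every channel coalition.

    Missing coalitions are treated as worth 0. Cost grows as 2^n, so this only
    suits a handful of channels.
    """
    n = len(channels)
    result: Dict[str, float] = {}
    for channel in channels:
        others = [c for c in channels if c != channel]
        total = 0.0
        for k in range(len(others) + 1):
            # Probability that exactly this size-k coalition precedes `channel` in a random ordering.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                coalition = frozenset(subset)
                marginal = value.get(coalition | {channel}, 0.0) - value.get(coalition, 0.0)
                total += weight * marginal
        result[channel] = total
    return result


# Hypothetical conversions for each combination of channels.
conversions = {
    frozenset(): 0,
    frozenset({"Social"}): 10,
    frozenset({"Email"}): 20,
    frozenset({"Search"}): 30,
    frozenset({"Social", "Email"}): 40,
    frozenset({"Social", "Search"}): 50,
    frozenset({"Email", "Search"}): 60,
    frozenset({"Social", "Email", "Search"}): 90,
}
credit = shapley_values(["Social", "Email", "Search"], conversions)
print({ch: round(v, 2) for ch, v in credit.items()})
# {'Social': 20.0, 'Email': 30.0, 'Search': 40.0}
```

The three credits sum to the full coalition’s 90 conversions. That "efficiency" property is what makes Shapley values attractive for splitting credit without leftover or double counting.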

Advantage: No arbitrary rules — adapts to your real customer behavior. The most accurate model when you have sufficient data.

Disadvantage: Requires significant data volume (Google recommends 300+ conversions and 3,000+ ad interactions monthly). Black-box nature makes it hard to explain why a channel received specific credit.

Best for: Mature organizations with high conversion volume. GA4 now uses this as the default.

How Does Google’s Data-Driven Attribution Work in GA4?

GA4 made data-driven attribution its default model in November 2023, replacing last-click for all new properties. It uses a Shapley value algorithm that examines converting and non-converting paths, calculating each touchpoint’s marginal contribution.

Key limitations to know:

- Minimum thresholds: Below 300 conversions per month, GA4 falls back to rules-based models. The data-driven model needs volume to be reliable.

- Walled garden problem: GA4’s DDA only sees touchpoints Google can observe. It can’t credit offline channels (TV, radio, events), competitor interactions, or touchpoints on Meta or TikTok.

- Lookback windows: Default 30 days for acquisition, 90 days for other conversions. Touchpoints outside the window get zero credit — problematic for B2B with 6+ month sales cycles.

- Black box: You can’t inspect the model weights or understand why specific credit was assigned. This makes it hard to challenge or validate.
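The lookback-window effect is easy to see in a sketch. The journey and its day offsets are hypothetical, and treating a touch that falls exactly on the boundary as inside the window is an assumption.

```python
from typing import List, Tuple


def within_lookback(
    touches: List[Tuple[str, int]], lookback_days: int
) -> List[Tuple[str, int]]:
    """Keep only touches inside the lookback window; anything older gets zero credit."""
    return [(channel, days) for channel, days in touches if days <= lookback_days]


# Hypothetical B2B journey: (channel, days before the deal closed).
b2b_journey = [
    ("LinkedIn Ad", 180),
    ("Whitepaper", 120),
    ("Webinar", 60),
    ("Demo Request", 20),
    ("Branded Search", 1),
]
visible = within_lookback(b2b_journey, lookback_days=90)
print([channel for channel, _ in visible])
# ['Webinar', 'Demo Request', 'Branded Search']
```

With a 90-day window, the two touches that started this six-month deal never enter the model, so no attribution rule applied afterward can credit them.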

GA4’s DDA is a good starting point, but it shouldn’t be your only measurement tool. Its blind spots — especially around offline channels and cross-platform journeys — mean it systematically overcredits Google’s own ecosystem.

What’s the Difference Between MTA, MMM, and Incrementality Testing?

Multi-touch attribution is one of three measurement approaches. Each answers a different question:

| Approach | What It Answers | Data Required | Privacy Impact | Best For |
|---|---|---|---|---|
| Multi-Touch Attribution | Which touchpoints contributed to this conversion? | User-level clickstream data | High (needs tracking) | Digital channel optimization |
| Media Mix Modeling | How should I allocate budget across channels? | 2-3 years of aggregate spend/outcome data | Minimal (aggregate data) | Strategic budget planning |
| Incrementality Testing | Did this campaign actually cause these conversions? | Controlled experiment (holdout groups) | Moderate (cohort-level) | Validating channel effectiveness |

The triangulation approach: Leading organizations now use all three together — MMM for strategic allocation, MTA for tactical optimization, and incrementality tests for validation. This is especially important because MTA tells you what touchpoints were present in converting paths, not which ones caused the conversion. Studies consistently show that 30-50% of retargeting-attributed conversions are non-incremental — the user would have converted anyway.

MMM is experiencing a renaissance because it works on aggregate data — no cookies or user-level tracking needed. Google’s open-source Meridian and Meta’s Robyn have made MMM accessible to mid-market teams, not just enterprises with data science departments.

How Do Privacy Changes Affect Attribution?

Multi-touch attribution relies on tracking individual users across touchpoints. That’s getting harder:

- Safari and Firefox block third-party cookies (~30-35% of browser market share)

- Apple’s App Tracking Transparency means 65-75% of iOS users opt out of tracking

- GDPR consent rates in the EU average 40-55%, leaving roughly half of European users invisible to MTA

The result: MTA models trained on observed data become increasingly biased toward trackable segments — primarily desktop Chrome users who consent to cookies. This isn’t a representative sample.

The adaptations that matter: server-side tracking to maintain first-party data collection, enhanced conversions APIs to match conversions without cookies, and first-party data strategies built on direct customer relationships. For broader context on how the cookieless landscape is reshaping measurement, see our dedicated guide.

Which Attribution Model Should You Choose?

| Your Situation | Recommended Model | Why |
|---|---|---|
| Under 1,000 monthly conversions | Position-Based (U-Shaped) | Simple, credits both discovery and close, easy to explain to stakeholders |
| 1,000-10,000 monthly conversions | GA4 Data-Driven + quarterly incrementality tests | Enough data for algorithmic model; tests validate the model’s output |
| B2B with long sales cycles | W-Shaped or Data-Driven | Captures the lead-creation moment; for 3-12 month journeys, note that GA4’s 90-day default lookback truncates early touches |
| E-commerce with short cycles | Time-Decay or Data-Driven | Recency matters for impulse/promotion-driven purchases |
| Enterprise with offline channels | MMM + MTA + Incrementality (triangulation) | Only way to measure TV, radio, OOH alongside digital |

Universal advice: Never rely on a single attribution model. Compare at least two models side by side. Channels where credit changes dramatically between models are your highest-uncertainty areas — and your best candidates for incrementality testing.
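The side-by-side comparison can be automated. This sketch takes per-channel credit shares from several models and flags channels whose share swings by more than a chosen threshold. The shares below are the last-click, linear, and position-based splits for the single four-touch example journey; in practice you would aggregate over all conversions, and the 0.3 threshold is an arbitrary illustrative choice.

```python
from typing import Dict, List, Tuple


def credit_spread(
    models: Dict[str, Dict[str, float]], threshold: float = 0.3
) -> List[Tuple[str, float]]:
    """Channels whose credit share varies by more than `threshold` across models, largest first."""
    channels = {channel for credit in models.values() for channel in credit}
    flagged = []
    for channel in channels:
        # A channel missing from a model's output received zero credit from that model.
        shares = [credit.get(channel, 0.0) for credit in models.values()]
        spread = max(shares) - min(shares)
        if spread > threshold:
            flagged.append((channel, round(spread, 3)))
    return sorted(flagged, key=lambda item: item[1], reverse=True)


models = {
    "last_click": {"Branded Search": 1.0},
    "linear": {"Facebook Ad": 0.25, "SEO Blog": 0.25, "Email": 0.25, "Branded Search": 0.25},
    "position_based": {"Facebook Ad": 0.4, "SEO Blog": 0.1, "Email": 0.1, "Branded Search": 0.4},
}
print(credit_spread(models))  # [('Branded Search', 0.75), ('Facebook Ad', 0.4)]
```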

Common Mistakes When Implementing Attribution

Mistake 1: Choosing a Model That Doesn’t Match Your Sales Cycle

Time-decay works poorly for B2B enterprise (6+ month cycles) where early-stage awareness touchpoints are crucial. Position-based works poorly for impulse purchases where there’s essentially one meaningful touchpoint.

Fix: Map your average customer journey length and touchpoint count before selecting a model. If your typical journey is 15+ touchpoints over 3+ months, linear or position-based models are too crude.
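A minimal sketch of that mapping step, assuming you can export each converting customer’s touch dates and conversion date. The data structure and sample journeys are hypothetical. Medians are reported alongside means because a few very long journeys can drag the mean upward.

```python
from datetime import date
from statistics import mean, median
from typing import Dict, List, Tuple

Journey = Tuple[List[date], date]  # (touch dates, conversion date)


def journey_profile(journeys: List[Journey]) -> Dict[str, float]:
    """Summarize touchpoint counts and days from first touch to conversion."""
    counts = [len(touches) for touches, _ in journeys]
    spans = [(converted - min(touches)).days for touches, converted in journeys]
    return {
        "mean_touchpoints": mean(counts),
        "median_touchpoints": median(counts),
        "mean_days_to_convert": mean(spans),
        "median_days_to_convert": median(spans),
    }


sample = [
    ([date(2024, 1, 1), date(2024, 1, 10), date(2024, 2, 1)], date(2024, 2, 5)),
    ([date(2024, 3, 1), date(2024, 3, 2)], date(2024, 3, 3)),
    (
        [date(2024, 1, 15), date(2024, 1, 20), date(2024, 2, 3),
         date(2024, 2, 28), date(2024, 3, 20), date(2024, 4, 10)],
        date(2024, 4, 15),
    ),
]
print({k: round(v, 1) for k, v in journey_profile(sample).items()})
# {'mean_touchpoints': 3.7, 'median_touchpoints': 3, 'mean_days_to_convert': 42.7, 'median_days_to_convert': 35}
```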

Mistake 2: Confusing Correlation With Causation

MTA tells you which touchpoints were present in converting paths — not which ones caused the conversion. Branded search appears in almost every converting path, but removing it would likely just shift clicks to organic brand results, not reduce conversions.

Fix: Use incrementality tests for your top channels. Run holdout experiments to measure whether conversions actually decrease when you pause a channel — that’s the true test of causation.
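The arithmetic behind a holdout test is short. This sketch, with hypothetical counts, compares the conversion rate of users exposed to a channel with a randomly held-out group that was not exposed, and adds a standard two-proportion z-score as a rough significance check. A real test also needs a sound randomization design and sample-size planning, which this does not cover.

```python
from math import sqrt
from typing import Dict


def holdout_lift(
    test_conversions: int, test_users: int,
    holdout_conversions: int, holdout_users: int,
) -> Dict[str, float]:
    """Compare an exposed test group with a randomly assigned, unexposed holdout group."""
    test_rate = test_conversions / test_users
    holdout_rate = holdout_conversions / holdout_users
    incremental_rate = test_rate - holdout_rate
    # Two-proportion z-score using the pooled conversion rate.
    pooled = (test_conversions + holdout_conversions) / (test_users + holdout_users)
    std_err = sqrt(pooled * (1 - pooled) * (1 / test_users + 1 / holdout_users))
    return {
        "relative_lift": incremental_rate / holdout_rate,
        # Share of the test group's conversions the channel actually caused.
        "incremental_share": incremental_rate / test_rate,
        "z_score": incremental_rate / std_err,
    }


# Hypothetical test: 100k exposed users vs 100k held out.
result = holdout_lift(2_000, 100_000, 1_200, 100_000)
print(round(result["relative_lift"], 3), round(result["incremental_share"], 3))
# 0.667 0.4
```

An incremental share of 0.4 means 60% of the exposed group’s conversions would have happened anyway, even though an attribution model would still see the channel in every one of those paths. The z-score here is about 14, well above the 1.96 cutoff commonly used for 95% confidence.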

Mistake 3: Ignoring Non-Trackable Touchpoints

MTA only credits what it can measure. Podcasts, word-of-mouth, in-store experiences, and offline advertising are invisible. This systematically overcredits digital channels, creating a biased picture that distorts budget allocation.

Fix: Supplement MTA with Media Mix Modeling for a holistic view that includes offline channels. At minimum, acknowledge the limitation when presenting attribution data to stakeholders.

Mistake 4: Insufficient Data Volume for Data-Driven Models

Implementing algorithmic attribution without enough conversions produces noisy, unreliable results. Below 300-500 monthly conversions, data-driven models don’t have enough signal to separate real patterns from random noise.

Fix: If you’re below the threshold, start with rules-based models (U-shaped or linear) and switch to data-driven once you have volume. GA4 automatically falls back when data is insufficient.
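A back-of-the-envelope sketch shows why low volume is a problem. It assumes conversion counts behave like Poisson noise (relative standard error of about 1/√count) and that conversions spread evenly across a hypothetical 50 distinct channel paths; real path distributions are far more skewed, which makes rare paths even noisier.

```python
from math import sqrt


def per_path_relative_error(monthly_conversions: int, distinct_paths: int) -> float:
    """Approximate relative standard error of each path's monthly conversion count.

    Simplifying assumptions: Poisson noise and an even spread across paths.
    """
    per_path = monthly_conversions / distinct_paths
    return 1 / sqrt(per_path)


for volume in (300, 3_000, 30_000):
    print(volume, round(per_path_relative_error(volume, distinct_paths=50), 2))
# 300 0.41
# 3000 0.13
# 30000 0.04
```

At 300 conversions a month, each path’s count swings by roughly 40% from noise alone, which is hard to distinguish from a real channel effect.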

Mistake 5: Treating Attribution as a One-Time Project

Attribution requires ongoing calibration. Customer behavior shifts, channel mix evolves, and market conditions change. A model calibrated last year may be producing misleading results today.

Fix: Review attribution model performance quarterly. Compare model outputs against incrementality test results. If the model says a channel is high-value but incrementality tests show low lift, recalibrate.
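One way to make that comparison routine is to compute, for each tested channel, the ratio of incremental conversions measured in a holdout test to the conversions the attribution model credited over the same period. The channel names, counts, and the 0.7 tolerance are hypothetical.

```python
from typing import Dict, List


def incrementality_factors(
    attributed: Dict[str, float], incremental: Dict[str, float]
) -> Dict[str, float]:
    """Measured incremental conversions divided by model-attributed conversions, per tested channel."""
    return {
        channel: incremental[channel] / attributed[channel]
        for channel in incremental
        if attributed.get(channel)  # skip channels the model gave no credit
    }


def needs_recalibration(factors: Dict[str, float], tolerance: float = 0.7) -> List[str]:
    """Channels whose model credit is well above what experiments support."""
    return sorted(channel for channel, factor in factors.items() if factor < tolerance)


attributed = {"Retargeting": 1_200, "Paid Social": 400, "Branded Search": 2_000}
incremental = {"Retargeting": 600, "Paid Social": 380}  # Branded Search not yet tested
factors = incrementality_factors(attributed, incremental)
print(factors)                       # {'Retargeting': 0.5, 'Paid Social': 0.95}
print(needs_recalibration(factors))  # ['Retargeting']
```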

Continue Learning

Attribution is deeply connected to how you measure and optimize your marketing:

- Last Click Attribution: Why It’s Misleading — the detailed case against single-touch models

- Customer Journey Analysis — how to map the touchpoints that attribution models evaluate

- Conversion Funnel Analysis — find where customers drop off between touchpoints

- Cookieless Tracking — how privacy changes reshape attribution data

- 18 Marketing KPIs Worth Tracking — which metrics attribution models should inform

Bottom Line

Multi-touch attribution is a lens, not a source of truth. Every model has blind spots: linear oversimplifies, time-decay undervalues awareness, and data-driven models are black boxes that require high volume to be reliable.

The right approach depends on your data maturity. Start with U-shaped if you’re moving beyond last-click. Graduate to GA4’s data-driven model once you clear 300+ monthly conversions, ideally closer to the 1,000 mark recommended in the table above. And regardless of which model you use, validate it with incrementality tests, because the only way to know if a channel truly drives conversions is to measure what happens when you turn it off.

Companies that switch from last-click to multi-touch attribution reallocate an average of 15-25% of their marketing budget within the first year. That reallocation — typically toward upper-funnel channels — is the real ROI of getting attribution right.